Anthropic Admits Errors Caused Claude Code Decline

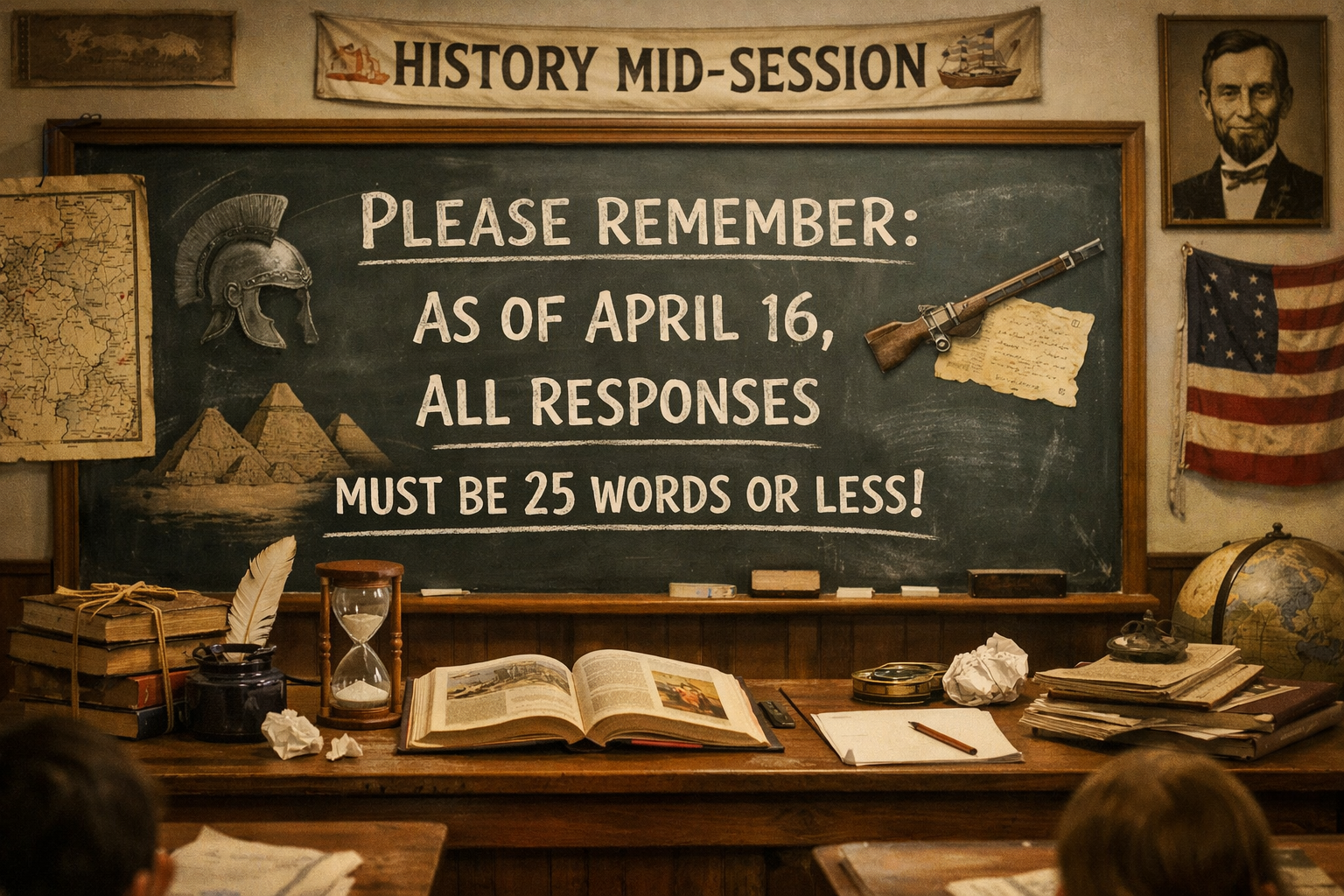

Anthropic has publicly acknowledged that engineering missteps caused a significant month-long decline in Claude Code’s performance, confirming widespread user complaints after weeks of frustration and subscription cancellations. In a post published to its engineering blog on Thursday, the company traced the problems to three distinct changes: a March 4 reduction in Claude Code’s default reasoning effort from “high” to “medium,” a March 26 bug that caused the model to continuously discard its own reasoning history mid-session, and an April 16 system prompt instruction capping responses at 25 words between tool calls. All three issues were resolved as of April 20, with the API unaffected throughout.

The Three Engineering Failures

The first change, rolled out on March 4, reduced Claude Code’s default reasoning effort from “high” to “medium” to cut latency, a tradeoff the company said in its blog post was the wrong one. Anthropic reverted the change on April 7 after users said they would prefer to default to higher intelligence and opt into lower effort for simple tasks. The setting governs how much computational effort the model devotes to reasoning, so lowering it had immediate consequences for output quality.

The second change, shipped on March 26, contained a bug that caused the model to continuously discard its own reasoning history mid-session, making it appear forgetful and erratic and draining users’ usage limits faster than expected. The bug was fixed on April 10 for Sonnet 4.6 and Opus 4.6. The caching error meant Claude progressively lost context about its own decisions, leading to repetitive behavior and unexpected tool choices.

The third issue, introduced on April 16, added a system prompt instruction capping the model’s responses at 25 words between tool calls, a change Anthropic said measurably hurt coding quality before it was reverted four days later. Later testing with a broader eval suite revealed a 3 percent quality drop. The change was intended to reduce verbosity in the newly released Opus 4.7 model but had unintended consequences for coding performance.
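As a rough illustration of the timeline described above, the short sketch below computes how many days each change was live before it was fixed or reverted. The dates come from the reporting above; the year 2026 is taken from the dates given elsewhere in this article, and the labels and the `days_live` helper are illustrative names, not anything published by Anthropic.

```python
from datetime import date

# (introduced, fixed or reverted) for each change, as reported in this article.
# The labels are illustrative shorthand, not Anthropic's own terminology.
changes = {
    "default effort lowered to medium": (date(2026, 3, 4), date(2026, 4, 7)),
    "reasoning-history bug": (date(2026, 3, 26), date(2026, 4, 10)),
    "25-word cap in system prompt": (date(2026, 4, 16), date(2026, 4, 20)),
}


def days_live(start: date, end: date) -> int:
    """Number of days between a change shipping and its fix or revert."""
    return (end - start).days


durations = {name: days_live(start, end) for name, (start, end) in changes.items()}

for name, days in durations.items():
    print(f"{name}: {days} days")
```

By this count the effort change was live for 34 days, the reasoning-history bug for 15 days, and the word cap for 4 days.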

User Backlash and Data-Driven Complaints

The performance decline sparked intense user frustration across multiple platforms. One of the most detailed public complaints was a GitHub issue filed on April 2, 2026, by Stella Laurenzo, whose LinkedIn profile identifies her as a Senior Director in AMD’s AI group. She wrote that Claude Code had regressed to the point that it could not be trusted for complex engineering work, and backed that claim with a sprawling analysis of 6,852 Claude Code session files, 17,871 thinking blocks and 234,760 tool calls.

Muratcan Koylan, a member of technical staff at Sully.ai, said in a post on X: “The frustrating part is that the Claude Code team, along with people deep in AI psychosis, have been gaslighting anyone who raises concerns about Claude Code’s recent issues. When you’re paying a lot of money for a product and it actually makes your job harder, to the point where people make you start questioning the quality of your own work, it really becomes a problem.”

Analyses from coding security company Veracode found that Claude Opus 4.7, Anthropic’s newest Claude model, which launched on April 16, introduced a vulnerability in 52% of coding tasks tested, up from 51% for Opus 4.1 and 50% for the lower-cost Claude Sonnet 4.5. By comparison, Veracode found that OpenAI’s models performed notably better, introducing vulnerabilities in around 30% of tasks. Dave Kennedy, CEO of cybersecurity firm TrustedSec and a former U.S. Marine Corps intelligence officer, told Forbes his team had measured a 47% drop in Claude’s code quality, based on tracking defects, security issues, and task completion rates.

Company Response and Remediation

The company acknowledged users’ frustration with the tool, saying: “This isn’t the experience users should expect from Claude Code,” and promised greater transparency around changes to Claude Code in the future. On April 23, the company reset usage limits for all subscribers.

Anthropic also set up the X account @ClaudeDevs to communicate product decisions more transparently. The move comes after criticism that the company had not been sufficiently candid about changes affecting user experience, particularly given Anthropic’s reputation for emphasizing transparency and user alignment.

Despite the public acknowledgment and fixes, some users remain skeptical. In response to the newest post from Anthropic, Kennedy said: “I’m glad they are trying to address this, but a month to get this out is crummy.”

Broader Context and Pricing Pressure

The performance issues occurred during a period of rapid growth and infrastructure challenges for Anthropic. The company’s head of growth recently acknowledged that the existing Pro and Max plans weren’t built for current agentic workloads, since they were created before compute-intensive tools like Claude Code existed. The company even briefly tested removing Claude Code access for new Pro subscribers but reversed course after backlash.

The current case reinforces a pattern: what users perceive as model regressions often turns out to be changes in the tooling layer or infrastructure rather than the models themselves. This distinction is crucial, as the API and underlying inference layer remained unaffected while the user-facing product experienced degradation.

Key Facts

- Three separate engineering changes between March 4 and April 16, 2026, caused the decline in Claude Code’s performance

- All issues were resolved as of April 20, 2026; API remained unaffected throughout

- Anthropic reset usage limits for all subscribers on April 23, 2026

- Security testing showed Claude Opus 4.7 introduced vulnerabilities in 52% of coding tasks

- One cybersecurity CEO measured a 47% drop in Claude’s code quality

- Anthropic launched @ClaudeDevs X account for improved transparency

Sources

- Anthropic explains Claude Code’s recent performance decline after weeks of user backlash – Fortune

- Anthropic admits it dumbed down Claude with ‘upgrades’ – The Register

- Is Anthropic ‘nerfing’ Claude? Users increasingly report performance degradation – VentureBeat

- Anthropic confirms Claude Code problems and promises stricter quality controls – The Decoder
